This thread has been broken out of a separate thread regarding the Femto Bolt sensor to address some of the other topics raised there.

@Terence - Before getting into the specific topics mentioned, I want to roll up some of the information from the other thread and recap what your success criteria are (please correct me if I am misrepresenting anything):

- Reliable, sustained capture of 10 Azure Kinects, each set to 1440p color and 640x576 NFOV depth, with your ‘fixed’ studio PC, containing an Intel i9-10980XE CPU and RTX A5000 (Ampere-gen) GPU.

- Reliable, sustained capture of 7-8 Azure Kinects, each set to 1440p color and 640x576 NFOV depth, with your Dell Precision 7780 mobile workstation PC, containing an Intel i9-13950HX CPU and RTX 5000 (Ada Lovelace-gen) GPU.

- Reliable, sustained capture of 3-5 Azure Kinects, each set to 2160p color and 640x576 NFOV depth, with your backup mobile PC, containing an unknown CPU and RTX 3060 Ti (Ampere-gen).

Topics in order of when they come into play in the Depthkit Studio workflow:

Hardware for 10x1440p Capture: It’s unclear which of your systems is experiencing dropped frames or other performance issues. The specs of your tower indicate that the Ampere-gen GPU is likely the bottleneck for 10x1440p capture - The new Ada Lovelace generation of GPUs has unlocked greater capture performance through the re-introduction of additional NVENC hardware resources, but Turing- and Ampere-gen GPUs are more limited. The specs of your Dell Precision 7780 mobile workstation look up to the task, but we have seen performance affected by thermal throttling before, particularly in laptop form factors. In general, we target zero dropped frames, and our Depthkit Studio Hardware systems are rated for such. Tolerance of dropped frames is up to you based on how bothersome the stutter of a dropped frame is within your project’s context.
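To put rough numbers on the encoding load, here is a minimal sketch comparing the raw color pixel throughput of the three capture targets recapped above. It assumes a 30 fps capture rate (not stated in this thread), and the function and labels are made up for illustration. Raw pixel rate is only a loose proxy for NVENC load, not a measurement of what any particular GPU can sustain.

```python
# Rough color pixel-throughput comparison for the capture targets in this thread.
# ASSUMPTION: 30 fps capture. Raw pixel rate is only a proxy for encoder load.

COLOR_RESOLUTIONS = {
    "1440p": (2560, 1440),
    "2160p": (3840, 2160),
}


def color_pixels_per_second(sensor_count, resolution, fps=30):
    """Total color pixels per second across all sensors (hypothetical helper)."""
    width, height = COLOR_RESOLUTIONS[resolution]
    return sensor_count * width * height * fps


# Upper end of each sensor-count range mentioned in the recap.
targets = [
    ("Studio tower, 10 x 1440p", 10, "1440p"),
    ("Precision 7780, 8 x 1440p", 8, "1440p"),
    ("Backup laptop, 5 x 2160p", 5, "2160p"),
]

for label, count, resolution in targets:
    rate = color_pixels_per_second(count, resolution)
    print(f"{label}: {rate / 1e9:.2f} gigapixels/s")
```

Under that assumption, 5 sensors at 2160p push slightly more color pixels per second than 10 sensors at 1440p, so a lower sensor count does not by itself mean a lighter load.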

Sensor Positioning: Though placing sensors closer to your subject results in higher resolution, 50cm (0.5m) is the minimum distance that the sensor can detect, and placing the sensors at exactly that distance risks some parts of your subject coming too close to the sensor to be captured (due to overexposing the depth camera).

As the distance between the sensors and the subjects also defines the size of the capture volume, the sensors usually need to be placed at a distance that frames the subject fully in any position in the capture volume - This usually works out to about 1.5m from the nearest edge of the desired capture volume to the sensor to capture a subject of average height.
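As a back-of-the-envelope check on that distance, here is a small sketch of how much of the scene a depth camera frames at a given range. It assumes the NFOV unbinned depth mode has a field of view of roughly 75 x 65 degrees, which is my recollection of the Azure Kinect hardware specification and should be verified against it. The helper name is made up for illustration, and the geometry ignores sensor tilt and lens distortion.

```python
import math

# ASSUMPTION: NFOV unbinned depth field of view of about 75 x 65 degrees
# (recalled from the Azure Kinect hardware specification; please verify).
NFOV_WIDE_AXIS_DEG = 75.0
NFOV_NARROW_AXIS_DEG = 65.0

MIN_DEPTH_RANGE_M = 0.5  # minimum detectable distance mentioned in this thread


def coverage_at_distance(distance_m, fov_deg):
    """Extent (in meters) framed along one axis at a given distance.

    Simple pinhole geometry: extent = 2 * d * tan(fov / 2).
    """
    return 2.0 * distance_m * math.tan(math.radians(fov_deg) / 2.0)


for distance in (MIN_DEPTH_RANGE_M, 1.0, 1.5, 2.0):
    narrow = coverage_at_distance(distance, NFOV_NARROW_AXIS_DEG)
    wide = coverage_at_distance(distance, NFOV_WIDE_AXIS_DEG)
    print(f"{distance:.1f} m: {narrow:.2f} m (65 deg axis), {wide:.2f} m (75 deg axis)")
```

With those assumed angles, 1.5m gives roughly 1.9m of coverage along the 65 degree axis, which lines up with framing a subject of average height, while at 0.5m the same axis covers well under 1m.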

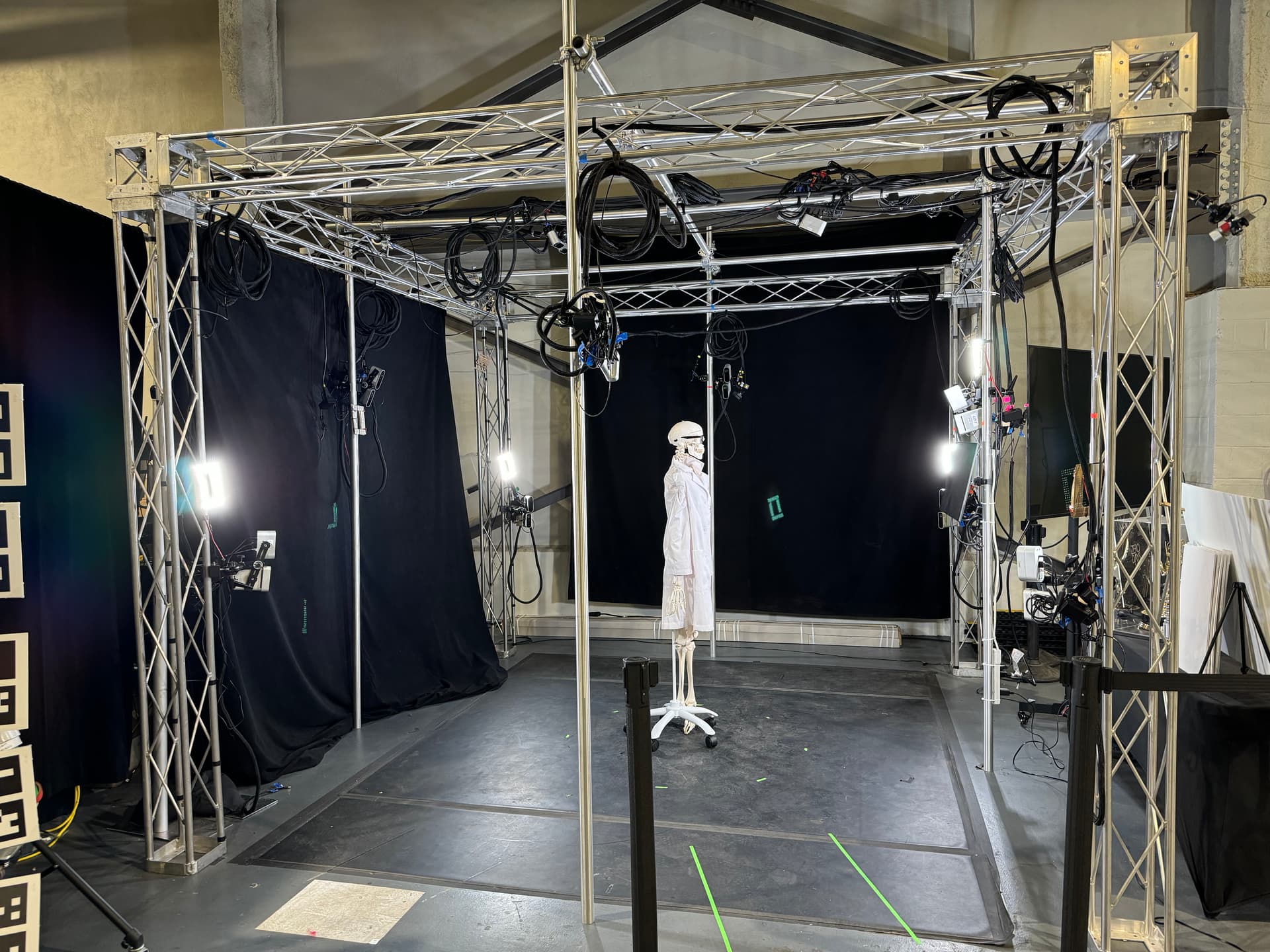

Our latest 10-sensor captures have been arranged in a way that resembles the 5-sensor array in our documentation, but with 3 additional sensors mirroring the hero position on the remaining 3 sides of the volume, and 2 more overhead (see above photo, with one Kinect and one Bolt in each position for side-by-side testing).

Lighting: In general, it is best to set proper lighting and proper sensor exposure settings at the time of capture, as “pushing” exposure of the encoded RGB data is limited due to the data coming off the sensors and how Depthkit compresses it.

Surface Detection with ToF Sensors: The sensor’s inability or partial ability to capture different hair textures is covered in our material/garment reflectivity documentation. We have found that the most effective solution is to have dry shampoo (available in different tints for different hair colors) on hand and apply it to hair and other surfaces (perhaps even body paint) as needed. If using aerosols like dry shampoo, spray them well away from the stage, as airborne particles may interfere with the sensors. The color of the surface doesn’t explicitly interfere with any part of the capture, but dark materials may be more likely to absorb infrared signals just as they absorb visible light, making them more challenging to detect with the sensors.

Capturing with Different Color Resolutions per Sensor: If your system is unable to maintain capture at a particular specification without dropping frames, you can reduce the color resolution of individual sensors to maintain the performance of the system overall. Details of the higher-resolution sensors will be retained, particularly in the combined per pixel image sequence and textured mesh sequence export formats.

Object/Background Removal: As of Depthkit v0.7.0 released in July 2023, Depthkit Studio no longer uses mattes to remove extraneous depth data from Depthkit Studio captures. This was done to speed up the post production process by implementing a new fusion algorithm and a bounding box within the edit stage. While experimental workflows exist to mask objects from the combined per pixel video export, current best practices are to remove any object from the capture volume which you don’t wish to be embedded within the capture. We recognize there are some scenarios where object removal is necessary, and are open to discussing your use cases further to prioritize this functionality in future releases of Depthkit.

Arcturus Integration: Depthkit Studio captures can be exported in Textured Mesh Sequence (OBJ) format, which varies in size depending on the Mesh Density specified in Depthkit’s Editor > Mesh Reconstruction settings - Higher density results in higher quality at the expense of storage needed. These OBJs are immediately ready to import locally into the Arcturus HoloEdit desktop application, which then facilitates data transfer and cloud processing within the application. Any support for different playback devices like iPhones, Android devices, and HoloLens is subject to Arcturus’ HoloSuite platform support.