Hi Jillian,

I have two goals for the scene we’re planning to capture:

- A stereoscopic 360 VR film, with post/VFX done in AE.

- A navigable VR/AR volumetric piece. AR would be via ARCore, and later, if the gods favor us third-world developers and give us a HoloLens or Magic Leap, we’ll look at optical see-through AR.

Getting back to your question: yes, my concern is with importing an OBJ sequence into AE that is not just a simple capture of a person on their own. It’s a bed with a person on it, so there will be some background, but that fits well with the “slice of life” style for the promo in VR (and AR), as well as the aesthetic we are aiming for in the AE VR film (the perspective of a ghost watching someone on a bed).

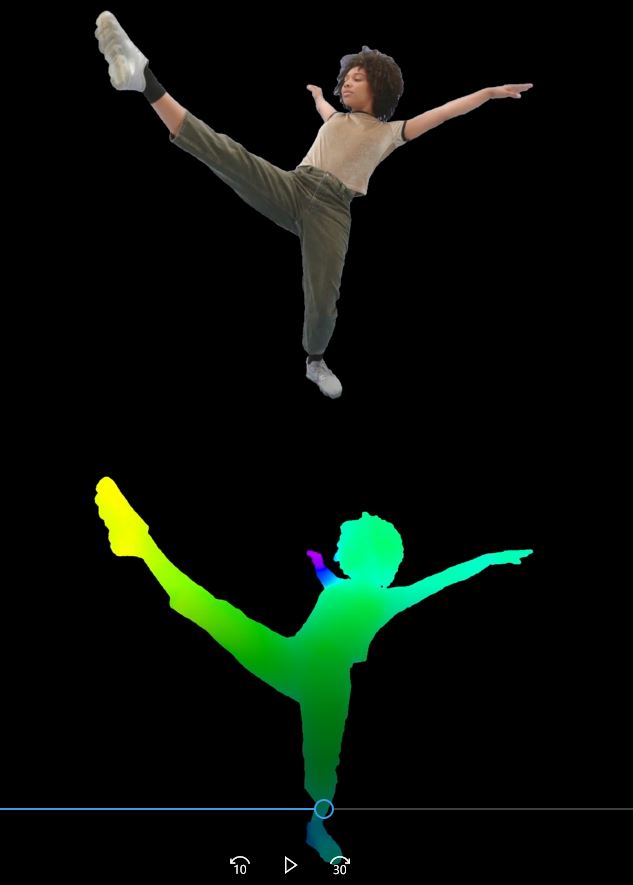

In looking at a Depthkit-to-grayscale conversion combined with AE’s displacement filter, the idea was to give some dimensionality to the bed and the person on it.

Tweaking the displacement value of the built-in AE Displacement Map filter would then generate the left/right comp views for stereo.
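To make the stereo idea concrete, here is a minimal Python sketch of the arithmetic I have in mind. It assumes the grayscale depth map is 8-bit, that mid-gray (128) means no displacement, and that the left and right views simply get equal and opposite horizontal shifts. The function name and the 10 px maximum are placeholders, not anything AE exposes.

```python
def stereo_offsets(gray_row, max_displacement_px):
    """Return per-pixel horizontal shifts (left view, right view) for one row.

    Assumption: 8-bit gray values, with 128 treated as zero displacement,
    255 as (almost) the full positive shift and 0 as the full negative shift.
    """
    left, right = [], []
    for g in gray_row:
        shift = (g - 128) / 128.0 * max_displacement_px
        left.append(shift)    # left-eye view shifts one way
        right.append(-shift)  # right-eye view shifts the opposite way
    return left, right


# Hypothetical row: neutral, nearest and farthest pixels, 10 px maximum shift.
left, right = stereo_offsets([128, 255, 0], 10)
print(left, right)
```

The larger the maximum displacement, the stronger the stereo separation, so this is the value I would expect to tweak per shot.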

I can also imagine such a workflow being great even for 2D filmmaking, where subtle AE camera movement would reveal the dimensionality of a Depthkit capture, which otherwise (unless imported as an OBJ sequence) is pretty pointless (no pun!) for VFX work in AE.

I’m worried about the huge file sizes that may result from importing a 30-second OBJ sequence such as that of a bed and part of the background room.

Granted, everything is still in the planning stage for the shot, and I’ve not yet tested AE’s workflow for OBJ sequence import in Element 3D, Plexus, Trapcode, etc.

Maybe one of these has a robust way of handling things at render time.
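For a rough sense of the file sizes I’m worried about, here is a back-of-the-envelope estimate in Python. The 30 fps frame rate and the 20 MB per OBJ frame are pure placeholders; the real per-frame size would have to be measured from one exported frame of the actual scene.

```python
def estimate_sequence_size_gb(duration_s, fps, bytes_per_frame):
    """Estimate frame count and total size (in GB) of an OBJ sequence.

    Assumption: every frame is roughly the same size on disk.
    """
    frames = int(duration_s * fps)
    total_bytes = frames * bytes_per_frame
    return frames, total_bytes / 1e9


# Hypothetical numbers: 30 s clip, 30 fps, 20 MB per exported OBJ frame.
frames, size_gb = estimate_sequence_size_gb(30, 30, 20_000_000)
print(frames, size_gb)
```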

Having a way to convert the Depthkit gradient to a standard grayscale depth map, and then feed that into AE’s displacement effect, would allow smooth, complex workflows in 2.5D.
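The conversion I’m picturing could look something like the Python sketch below. It assumes the Depthkit gradient encodes depth as hue, with hue 0 as nearest and weakly saturated or dark pixels meaning "no depth data". Both the mapping direction and the thresholds are my assumptions and would need checking against an actual export.

```python
import colorsys


def hue_depth_to_gray(rgb_pixels, near_is_white=True):
    """Map hue-encoded depth pixels (r, g, b in 0..255) to 8-bit gray values.

    Assumptions: depth is stored as hue (0.0 = nearest, 1.0 = farthest),
    and pixels with low saturation or brightness carry no depth (output 0).
    """
    gray = []
    for r, g, b in rgb_pixels:
        h, s, v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
        if s < 0.5 or v < 0.5:
            gray.append(0)  # treat as a hole in the depth data
            continue
        depth = 1.0 - h if near_is_white else h
        gray.append(round(depth * 255))
    return gray


# Pure red, green, blue and black pixels as a quick sanity check.
print(hue_depth_to_gray([(255, 0, 0), (0, 255, 0), (0, 0, 255), (0, 0, 0)]))
```

The resulting grayscale frames could then be rendered out as an image sequence and used as the displacement map layer in AE.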