Hey @CoryAllen and @James,

I have only done a few first recordings so far, but I can already say that I am amazed by the performance and the speed of the workflow.

I am working with 8 Azure sensors on my second, slower system, into which I moved my A4000, and my first impression is that it will work too. 🙂 Which is amazing!!!

During the first hours I had some fresh thoughts about operating Depthkit Studio that may find their way into a future version of Depthkit.

Here are my workflow thoughts:

- In the editor tab, for changes to the fine-tuning of texture blend and surface:

→ an undo/redo button could be useful …

- A framedrop history for each recording → shown after the recording is finished (just to double-check).

For example, to find the dropped frame, or, if it was a longer recording, to see that at a certain frame there was a dropout on sensor 4 … so that you can decide to split the take and record again from a particular moment on, instead of recording the full take one more time.

- In the calibration tab, under calibration sensor links: → a button to switch a “show only linked” option on and off.

- Sensor names are not visible in the assembled stage when I am not in the sensor link sub-function … but for depth range changes in the record tab they would be helpful too, as I have the impression that I gain performance when I reduce the full view to a limited depth range.

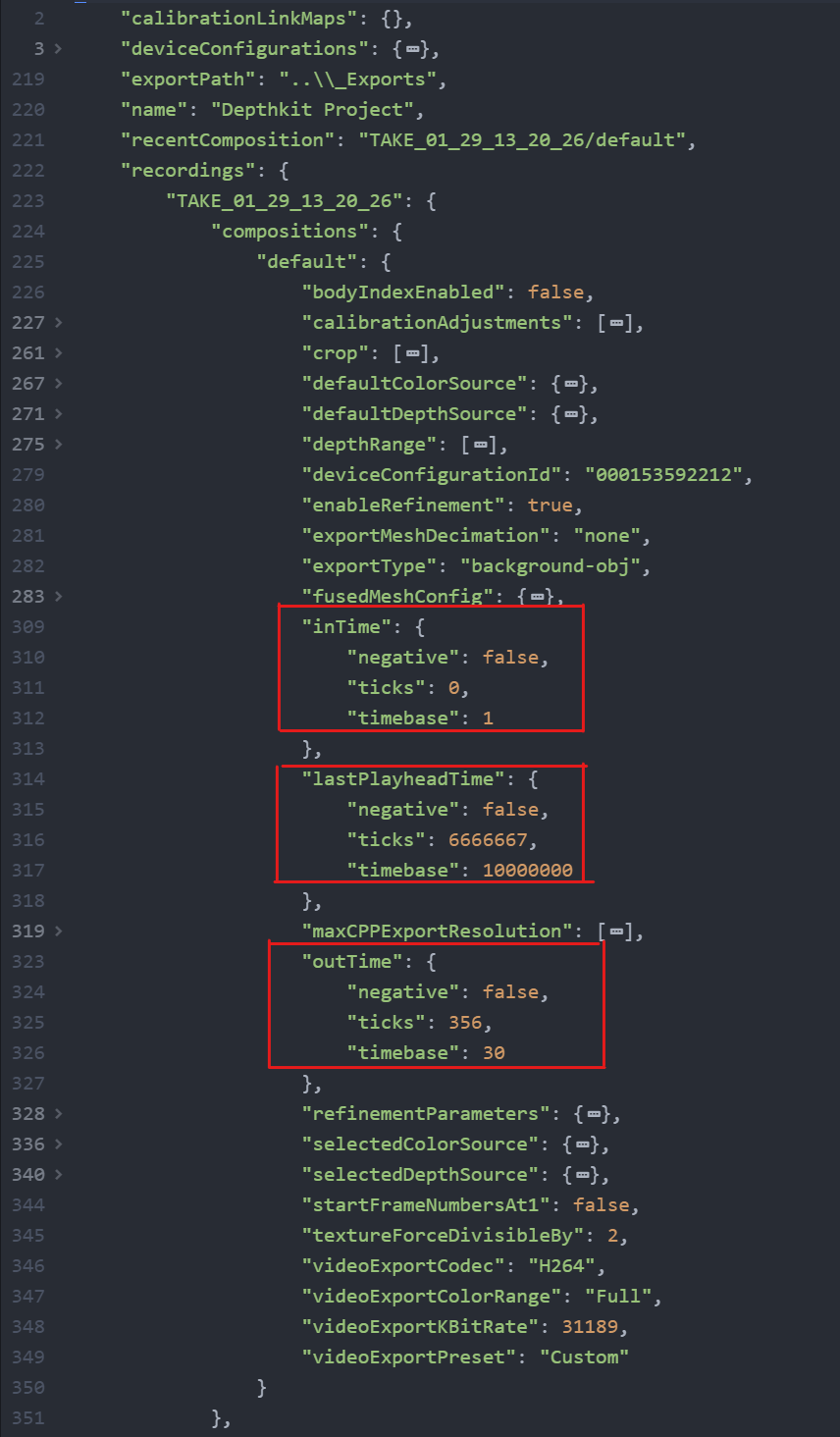

- As mentioned, during calibration I tested limiting the depth range (beforehand, in the recording tab), but after recording and tuning the bounding box I see the whole recording area in full again?

→ This means the depth limitation does not apply during recording, but it somehow helps performance.

→ At least that is my feeling!

→ If I limit the depth range in addition to the bounding box, the recording process is smoother, but as I said, during recording the full range is back … ? This is a bit confusing, as it does have an impact, or at least it feels like it.

- An option for color matching the original Azure RGB would be nice!!! That means shooting a color card during the calibration process, for example the Datacolor SpyderCHECKR 24, then, as in DaVinci for example, using the function that presents a grid of squares which you drag over the chart in the footage, and then creating a cube file for each sensor … so that the skin tones fit nicely together.

Example 1: https://www.youtube.com/watch?v=2VwR9QubmtE

At the moment, without this function, I would take the original recordings, do the job inside DaVinci, export the same clip in the same format and the same length, and replace the original one.

Example 2: https://www.youtube.com/watch?v=DTeowVGOcGQ

- For surface and texture fine-tuning: a button to reset the changes back to zero … or back to the automated suggestion.

- The grouping in the editing tab is very nice, but it would be good to be able to open the group and maybe switch one sensor off, or apply depth range limits after recording if they were not applied before.

- For the bounding box function I have an inspiration from EFEVE … There it was possible to create several bounding boxes, so you could cut out an object from inside the bounding box.

(Detailed remark: in EFEVE you had to flip the removal function of the second or third bounding box for it to work inside the main bounding box or a sub-box.)
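
Coming back to the framedrop history idea above: a rough sketch of what I have in mind, just to make it concrete. The timestamps, the 30 fps value, the tolerance and the function name are all made up by me for illustration. I do not know how Depthkit stores or reports frame timing internally.

```python
def find_dropped_frames(timestamps_ms, fps=30.0, tolerance=0.5):
    """Return (frame_index, missing_count) for every gap in one sensor's timestamps."""
    frame_ms = 1000.0 / fps
    drops = []
    for i in range(1, len(timestamps_ms)):
        gap = timestamps_ms[i] - timestamps_ms[i - 1]
        # A gap clearly longer than one frame interval means frames are missing.
        if gap > frame_ms * (1.0 + tolerance):
            missing = round(gap / frame_ms) - 1
            drops.append((i, missing))
    return drops


# Hypothetical per-sensor timestamps in milliseconds (not real Depthkit data).
recording = {
    "sensor 1": [0, 33, 67, 100, 133, 167],
    "sensor 4": [0, 33, 67, 167, 200],
}

history = {name: find_dropped_frames(ts) for name, ts in recording.items()}
for name, drops in history.items():
    print(name, drops)
```

With a list like this per sensor, it would be easy to see at which frame a dropout happened and to decide from where to record again.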

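And for the color matching idea above, a very simplified sketch of the "chart to cube file per sensor" step. It only fits one gain per RGB channel from the chart patches, which is far less than what a real color match function does, and the patch values and function names are hypothetical. The output follows the usual .cube text layout (a `LUT_3D_SIZE` line, then one RGB triplet per line with red changing fastest).

```python
def channel_gains(measured, reference):
    """Least-squares gain per RGB channel so that measured * gain is close to reference."""
    gains = []
    for c in range(3):
        num = sum(m[c] * r[c] for m, r in zip(measured, reference))
        den = sum(m[c] * m[c] for m in measured)
        gains.append(num / den)
    return gains


def cube_lines(gains, size=2):
    """Build the text lines of a 3D LUT in .cube layout (red index changes fastest)."""
    lines = [f"LUT_3D_SIZE {size}"]
    for b in range(size):
        for g in range(size):
            for r in range(size):
                rgb = (r / (size - 1), g / (size - 1), b / (size - 1))
                # Apply the per-channel gain and clip to the valid 0..1 range.
                out = [min(1.0, v * k) for v, k in zip(rgb, gains)]
                lines.append(" ".join(f"{v:.6f}" for v in out))
    return lines


# Hypothetical gray patch values in 0..1: this sensor renders green too dark.
reference = [(0.8, 0.8, 0.8), (0.4, 0.4, 0.4), (0.2, 0.2, 0.2)]
measured = [(0.8, 0.64, 0.8), (0.4, 0.32, 0.4), (0.2, 0.16, 0.2)]

gains = channel_gains(measured, reference)
lut = cube_lines(gains, size=2)
print("\n".join(lut))
```

In practice the LUT size would be larger (for example 17 or 33), and the joined lines would be written to one .cube file per sensor.
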
OK, that's it for the moment.

I will keep testing …

I love the new version in general and I look forward to starting to play with my test recordings inside Unity.

greetings Martn