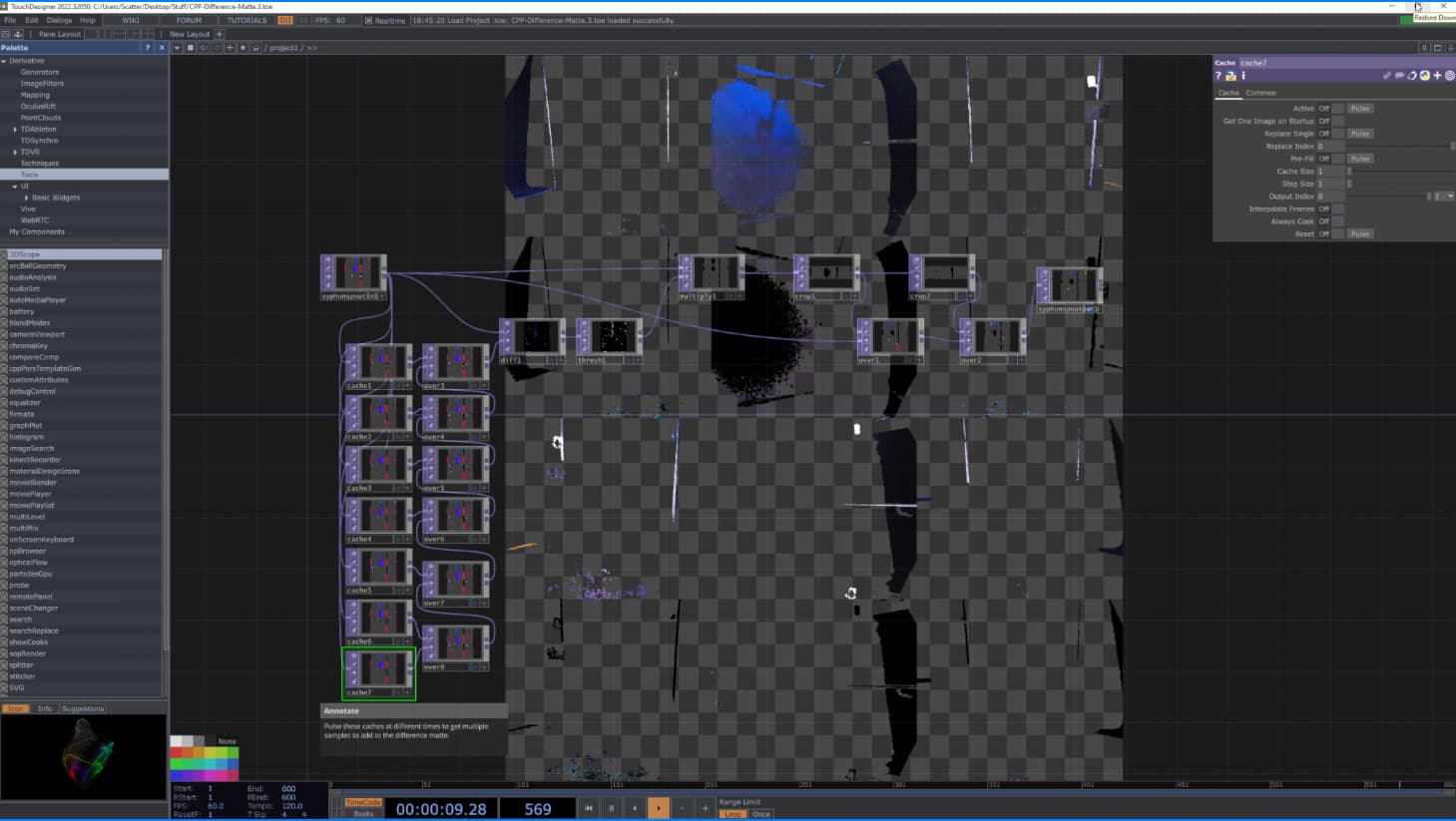

We have been working with the new livestreaming setup over the past month. Everything is up and running and streaming to a Quest Pro headset. However, we haven’t been able to achieve the same visual quality as in your YouTube video "Depthkit Livestreaming Tutorial - Part 3: Peer-to-Peer Livestreaming with WebRTC" (the clip at the beginning with both of you, and also the segment towards the end where Cory steps into the volume during the walkthrough).

In our setup, the system is picking up walls, floors, etc., but we noticed that your videos have none of these issues. Any advice on how to achieve this on our end? We are aware of the limitations of the Lite Renderer and would also love to hear about anything in the works that might help us out.

Thanks!