@BryanDunphy Thanks for sharing all of this material.

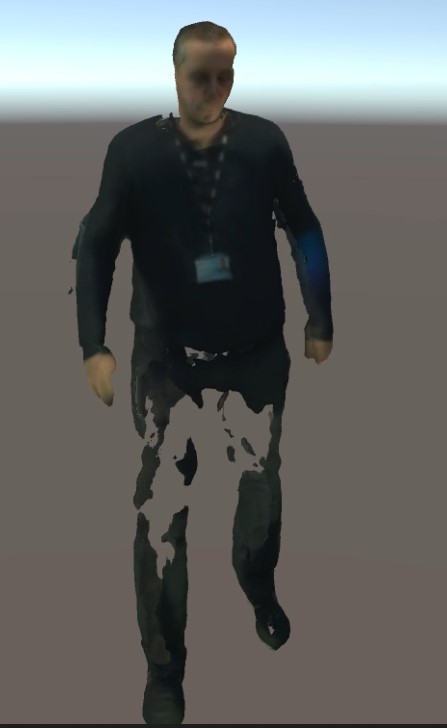

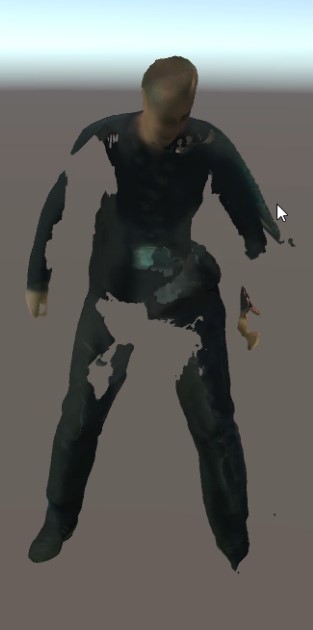

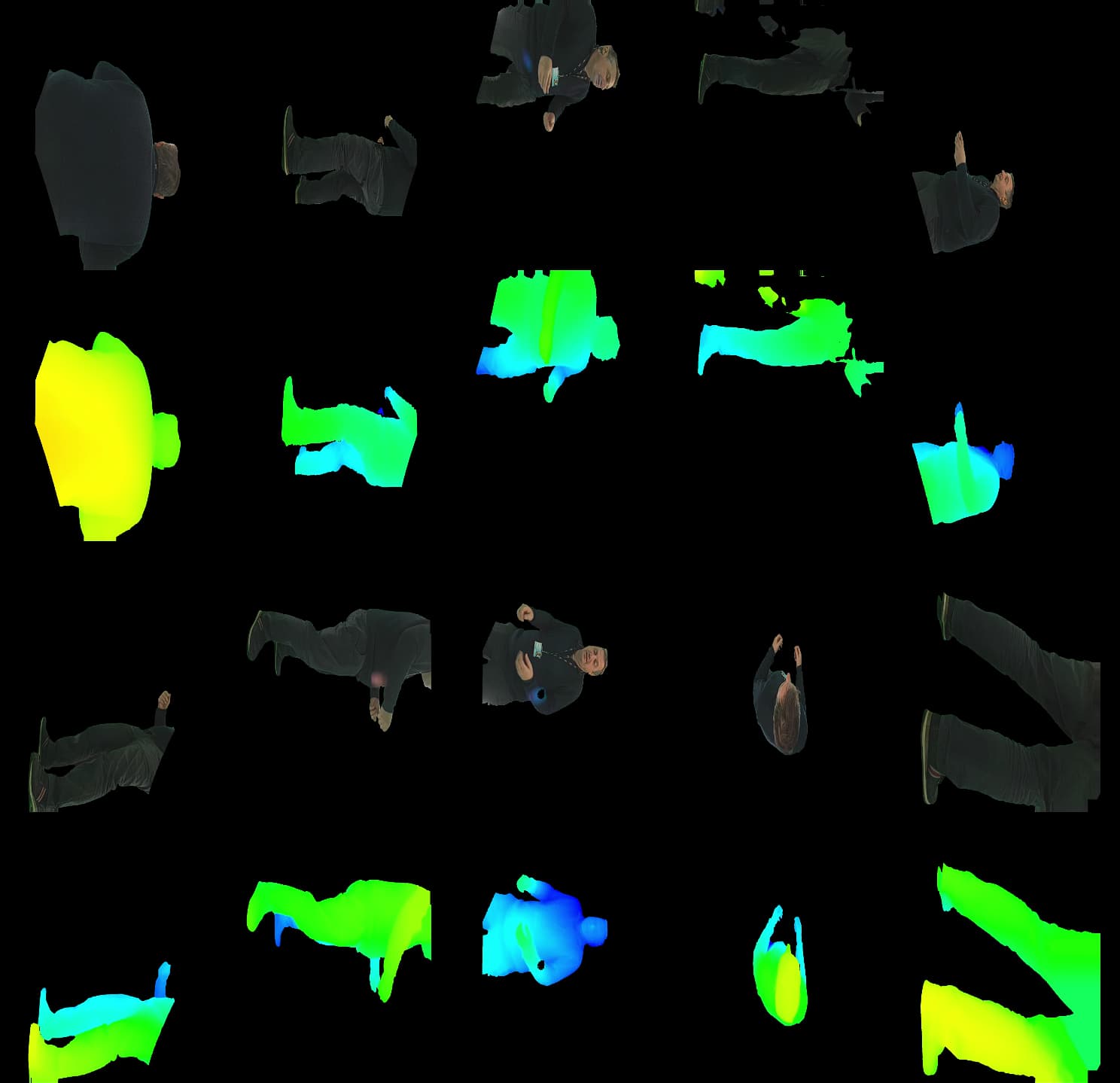

It looks like the primary cause of the holes in your asset is holes in the mattes generated by the AutoMatteProcessor. Although this is a useful tool for quickly generating mattes, the results aren’t always perfect: any parts of your subject that the algorithm has trouble distinguishing from the background will come out as black, blotchy areas like the ones in your screenshots. I suspect the algorithm can’t differentiate between your subject’s dark clothing and a shadowy area of the background.
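To illustrate why similar tones cause holes, here is a minimal sketch of a simple difference matte. This is not how the AutoMatteProcessor works internally; it only shows the general failure mode. It assumes a grayscale frame, a clean plate of the empty background, and a hypothetical `threshold` value, and it marks a pixel as subject only when that pixel differs enough from the clean plate.

```python
def difference_matte(frame, clean_plate, threshold=30):
    """Return a binary matte (255 = subject, 0 = background).

    frame and clean_plate are same-sized 2D lists of grayscale values (0-255).
    A pixel counts as subject only if it differs from the clean plate by more
    than `threshold` (a hypothetical, illustrative value).
    """
    matte = []
    for frame_row, plate_row in zip(frame, clean_plate):
        matte.append([255 if abs(f - p) > threshold else 0
                      for f, p in zip(frame_row, plate_row)])
    return matte


# A bright wall with a shadowy patch on the right (values around 40).
clean_plate = [
    [200, 200, 40, 40],
    [200, 200, 40, 40],
]
# The subject's dark clothing (value 30) covers the middle two columns.
frame = [
    [200, 30, 30, 40],
    [200, 30, 30, 40],
]
for row in difference_matte(frame, clean_plate):
    print(row)  # prints [0, 255, 0, 0] for each row
```

The clothing in front of the bright wall is kept, but the same clothing in front of the shadow differs by only 10 levels, so it drops out of the matte as a hole.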

For future shoots, dressing the set with a neutral, solid-colored backdrop will help the AutoMatteProcessor produce higher-quality mattes.

For material that has already been recorded, there are two ways to recover the areas of your asset that have holes in them.

Repair the Mattes: Depthkit’s Refinement process assumes that the mattes perfectly segment your subject from the background, so if there are holes in a matte, the refinement settings won’t recover any data that’s blacked out. Outside of Depthkit, in compositing software like Resolve/Fusion or After Effects, you can run difference/delta keyers or rotoscoping tools (e.g. After Effects’ Rotobrush) on the original footage, or even track solid white shapes onto the mattes themselves to cover the holes. Then feed the new mattes back into Depthkit and re-export the asset.
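Covering holes with solid white shapes amounts to filling enclosed black regions in the matte. As a rough sketch of that idea outside any particular compositing package, the Python below flood-fills from the frame border to find the true background and paints every enclosed black region white. The function name `fill_matte_holes` and the list-of-rows matte format are illustrative assumptions; this is not a Depthkit, Resolve, or After Effects feature.

```python
from collections import deque


def fill_matte_holes(matte):
    """Fill enclosed black holes in a binary matte (255 = subject, 0 = background).

    Black pixels connected (4-neighbour) to the image border are treated as real
    background; any black region not reachable from the border is an enclosed
    hole and is painted white.
    """
    height, width = len(matte), len(matte[0])
    reachable = [[False] * width for _ in range(height)]
    queue = deque()

    # Seed the flood fill with every black pixel on the image border.
    for y in range(height):
        for x in range(width):
            on_border = y in (0, height - 1) or x in (0, width - 1)
            if on_border and matte[y][x] == 0:
                reachable[y][x] = True
                queue.append((y, x))

    # Breadth-first flood fill through connected black pixels.
    while queue:
        y, x = queue.popleft()
        for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
            if (0 <= ny < height and 0 <= nx < width
                    and not reachable[ny][nx] and matte[ny][nx] == 0):
                reachable[ny][nx] = True
                queue.append((ny, nx))

    # Keep subject pixels; black pixels the fill never reached are holes -> white.
    return [[255 if matte[y][x] != 0 or not reachable[y][x] else 0
             for x in range(width)]
            for y in range(height)]


matte = [
    [0, 0, 0, 0, 0],
    [0, 255, 255, 255, 0],
    [0, 255, 0, 255, 0],   # enclosed hole in the middle of the subject
    [0, 255, 255, 255, 0],
    [0, 0, 0, 0, 0],
]
for row in fill_matte_holes(matte):
    print(row)
```

Note the limitation: a hole that breaks through the edge of the subject’s silhouette is connected to the background and will not be filled, which is where rotoscoping or hand-placed white shapes are still needed. Running this over a real matte sequence would also require an image library (for example Pillow) to read and write the frames.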

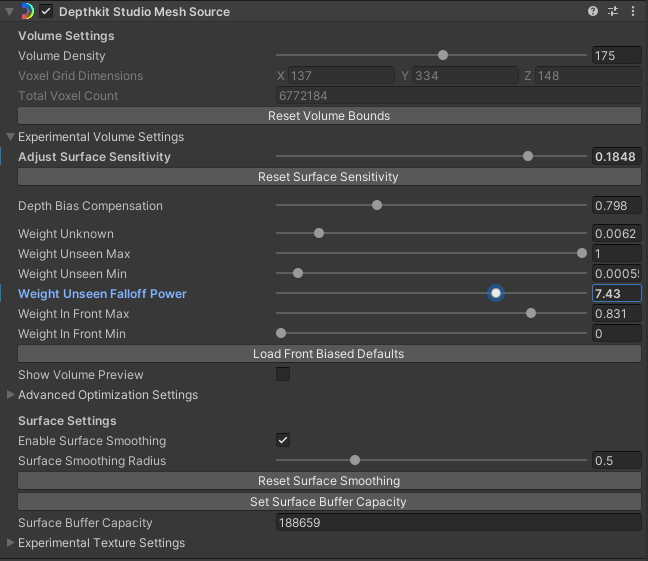

Mesh Reconstruction Settings: This is less effective than fixing the mattes, but in the Unity object’s inspector, under the Depthkit Studio Mesh Source component, you’ll find these settings:

The settings with the greatest effect on filling these kinds of holes are ‘Weight Unknown’ and ‘Weight Unseen Falloff Power’. Reduce both values, then adjust the ‘Adjust Surface Sensitivity’ slider to taste. However, doing all of this makes the renderer more likely to render extraneous geometry around your subject.

One side note: it looks like Surface Smoothing on your asset is set very high. We recommend setting it between 0.3 and 0.5 and then using the other mesh reconstruction settings listed above to remove loose geometry.

Let me know if you have any further questions.