Hi Jillian,

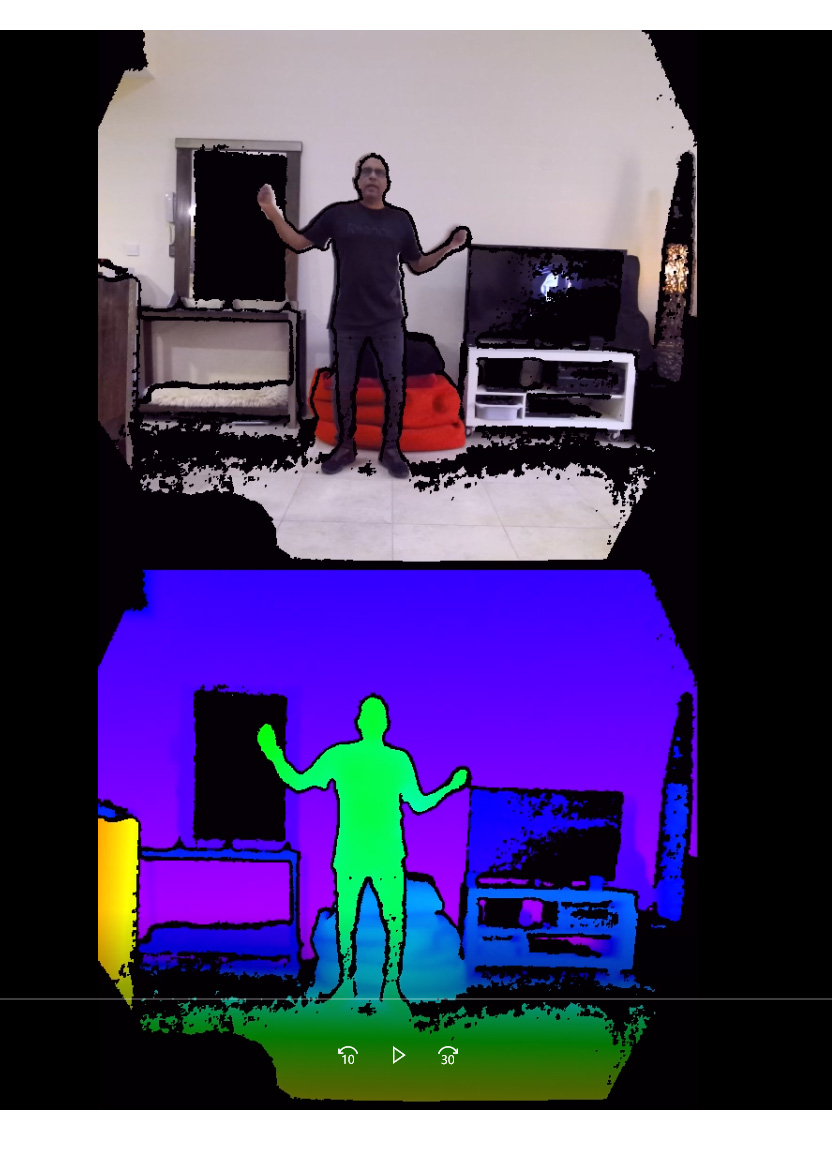

I was a bit late in replying, but I’m now seriously evaluating the Kinect route versus depth-from-stereo (depth maps) for volumetric filmmaking with a pseudo-6DoF effect.

Both approaches have their pros and cons, but overall I’m leaning toward the depth-map-from-stereo approach.

I’ll possibly end up using the Azure Kinect workflow only for green-screen human composites, wherein the original depth map captured from the Azure Kinect (even via their own krecorder app) will be converted to grayscale.

Through trial and error, I’ve managed to massage a good grayscale depth map out of the original 16-bit depth maps from the Kinect.
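
For anyone wanting to try the same kind of conversion, here is a minimal sketch of one way to do it. It is not necessarily the exact recipe I ended up with. It assumes the depth values are 16-bit millimetre distances with 0 meaning "no reading", and the function name and the `near_mm`/`far_mm` clipping range are made up for illustration and will need tuning per scene. It is plain Python on nested lists so it runs without dependencies; the same arithmetic can be vectorized with NumPy.

```python
def depth_to_gray8(depth_mm, near_mm=500, far_mm=4000):
    """Map 16-bit depth (millimetres) to 8-bit gray: near = white, far = black.

    depth_mm is a list of rows of integers. A value of 0 is treated as an
    invalid pixel (assumption) and is written out as black.
    near_mm and far_mm are hypothetical defaults, not values from any SDK.
    """
    if far_mm <= near_mm:
        raise ValueError("far_mm must be greater than near_mm")
    span = far_mm - near_mm
    gray = []
    for row in depth_mm:
        gray_row = []
        for d in row:
            if d == 0:
                # Invalid pixel: no depth reading, so output black.
                gray_row.append(0)
                continue
            # Clamp into the chosen range, then invert so near is bright.
            d = min(max(d, near_mm), far_mm)
            gray_row.append(round(255 * (far_mm - d) / span))
        gray.append(gray_row)
    return gray


if __name__ == "__main__":
    frame = [[0, 500, 2250, 4000, 9000]]
    print(depth_to_gray8(frame))
```

Squeezing 16 bits into 8 throws away a lot of precision, so the narrower the near/far range, the more gray levels are left for the subject.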

Suggestion:

I see a way to keep subscriptions flowing for DepthKit Pro by adding the following features:

- Add the ability to export the depth map in a standard 8-bit grayscale format (white for near, black for far).

- Add a script/shader for Unity, under the DepthKit plugin, that outputs a whole scene in the plasma/color depth style, which can then be imported back into DepthKit Pro to be manipulated and/or converted to grayscale depth.

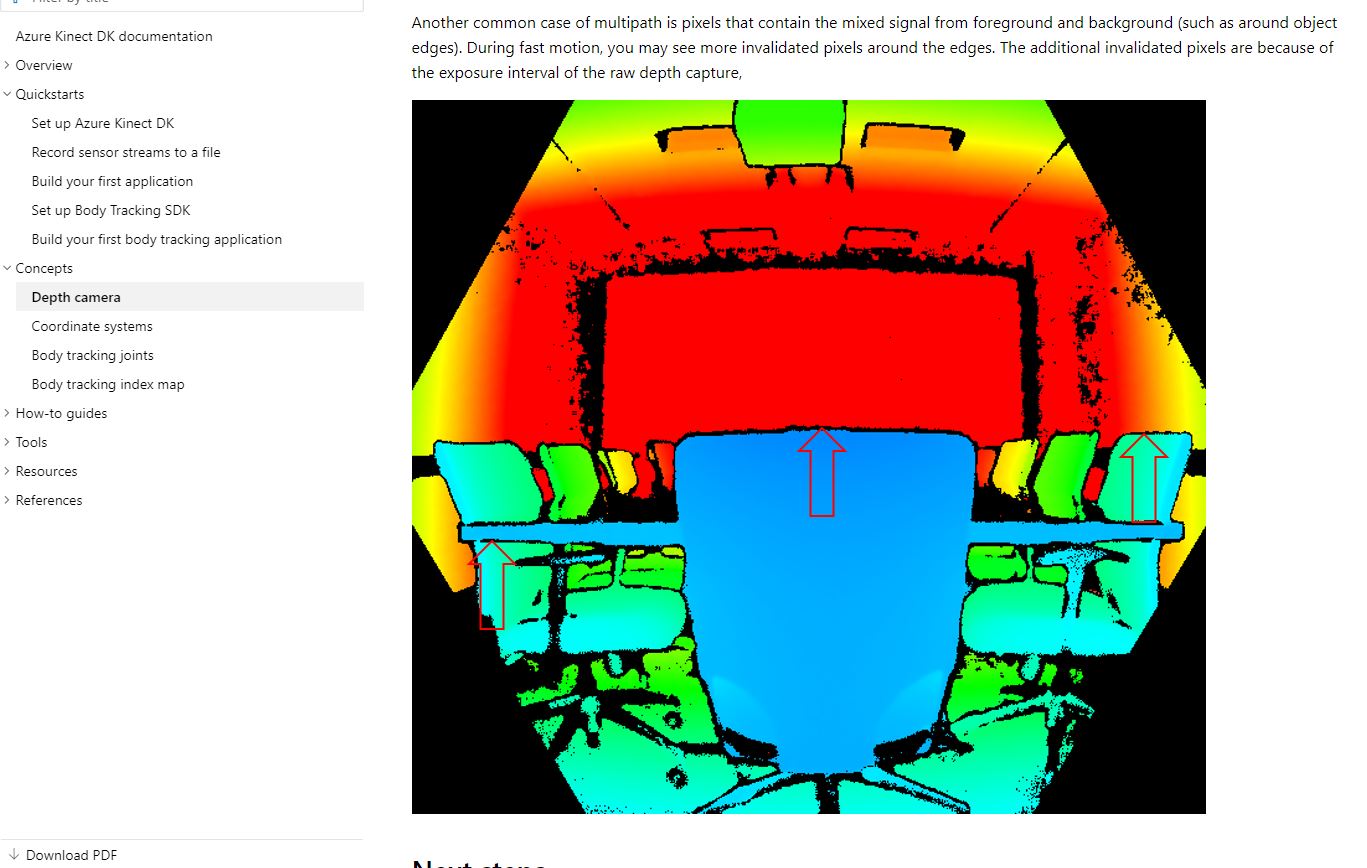

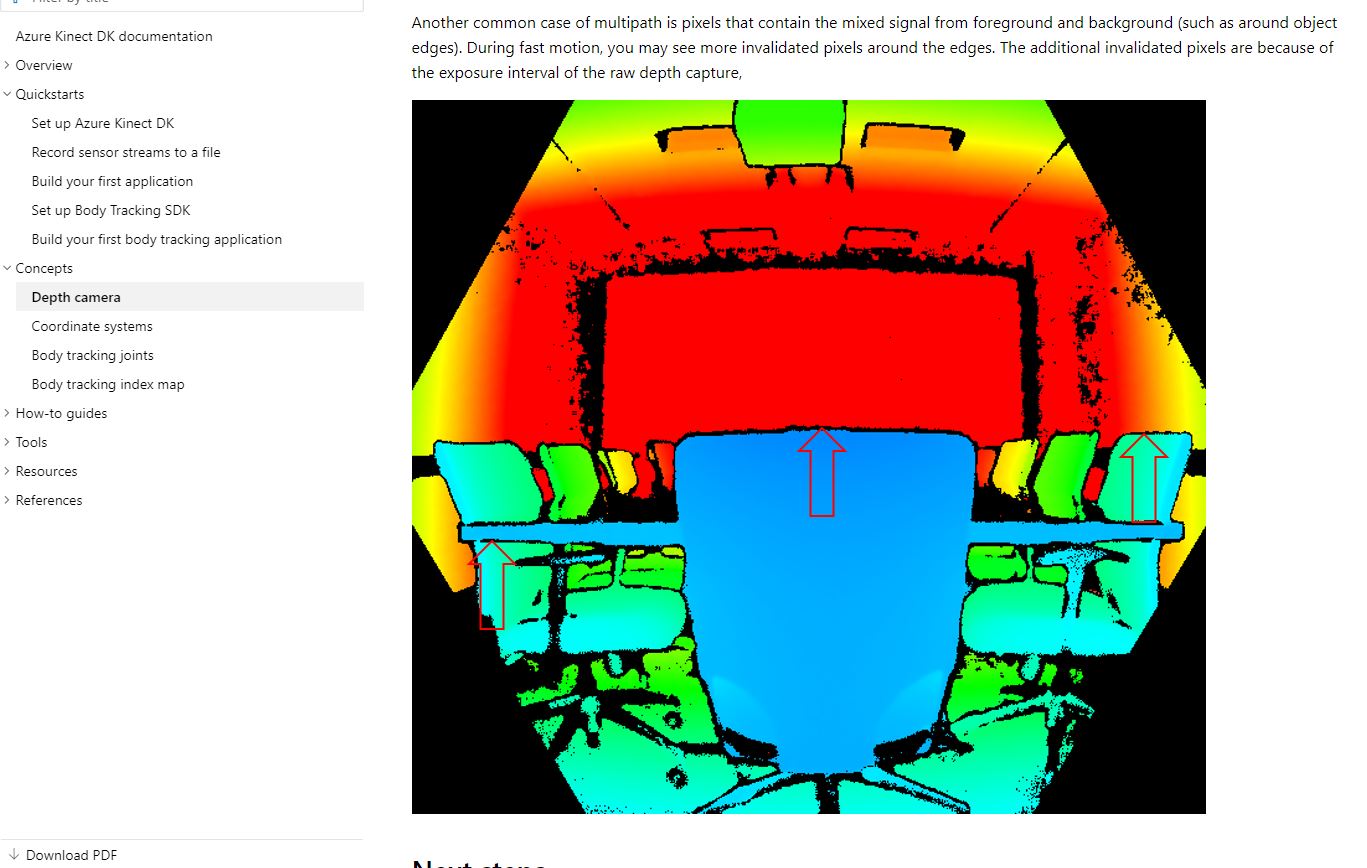

Meanwhile, regarding the original “border edges” issue, I’ve found out from Azure Kinect’s own site that the problem stems from invalidated pixels caused by the exposure interval in the raw capture.

What they don’t tell you is how to fix or mitigate this, which in essence severely cripples the use of the Kinect for any environment capture (the resulting video will have ghastly edges at the wrong depth).
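
One crude mitigation that might be worth experimenting with is hole filling. This is only a sketch under my own assumptions and not anything from the Kinect documentation: it assumes invalidated pixels are stored as 0, and it fills each one with the farther of its nearest valid neighbours on the same row, on the guess that edge holes usually belong to the background. The function name is hypothetical.

```python
def fill_invalid_rowwise(depth_mm, invalid=0):
    """Fill invalid depth pixels from the nearest valid neighbours in each row.

    For every invalid pixel, look at the nearest valid value to its left and
    to its right and keep the larger (farther) one. Pixels in a row with no
    valid values at all stay invalid. The input is not modified.
    """
    filled = []
    for row in depth_mm:
        n = len(row)

        # Nearest valid value at or to the left of each position.
        left = [invalid] * n
        last = invalid
        for i in range(n):
            if row[i] != invalid:
                last = row[i]
            left[i] = last

        # Nearest valid value at or to the right of each position.
        right = [invalid] * n
        last = invalid
        for i in range(n - 1, -1, -1):
            if row[i] != invalid:
                last = row[i]
            right[i] = last

        # Keep valid pixels; fill holes with the farther neighbour.
        filled.append([
            d if d != invalid else max(l, r)
            for d, l, r in zip(row, left, right)
        ])
    return filled


if __name__ == "__main__":
    print(fill_invalid_rowwise([[1000, 0, 0, 3000]]))
```

This only hides the holes; it does not recover real depth, so it is more of a cosmetic cleanup before converting to grayscale than a true fix.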

Image attached from Kinect website